Measuring Zero Trust: The Dashboard Your Board Wants to See

By Day 90, someone on the board will ask how the Zero Trust programme is performing. If the answer is “we deployed MFA” or “we have 14 dashboards in Splunk,” the programme has already lost the Year-Two budget conversation. 70% of boards now include cybersecurity in quarterly reporting, and the NACD Director’s Handbook on Cyber Risk Oversight explicitly asks directors to quantify financial exposure, not technical posture. The dashboard that gets you funded is the one that speaks in dollars and days, not percentages and pillars.

This is an Operations chapter of the 90-Day Zero Trust Playbook. It turns the technical work from Phases 1–3 into the language the steering committee and the board need.

Key Takeaways

- 70% of boards now review cybersecurity quarterly; NACD’s Director’s Handbook frames financial-exposure quantification as a director’s obligation, not a nice-to-have

- IBM’s 2025 Cost of a Data Breach Report pins average breach cost at $4.44M with a 241-day lifecycle; breaches contained in <200 days cost $3.87M vs. $5.01M for >200 days — the containment delta is the story

- Mature Zero Trust programmes achieve 40–50% faster containment versus non-Zero-Trust peers (Forrester)

- Organisations deploying AI in security ops report 68–80 day lifecycle reduction and $1.9M per-breach savings (IBM 2025) — a quantifiable number to put into the ROI model

- The dashboard has two panels: leading indicators (controls coverage, maturity) that move weekly, and lagging indicators (MTTD, MTTC, incidents) that move quarterly. The board cares mostly about the lagging panel, translated into dollars

Leading Indicators (Weekly)

Leading indicators tell you whether the controls are in place. They move in weeks and should reach their targets by Day 90.

- MFA adoption % — target 100% of in-scope users; a stalled denominator is the early warning sign.

- SSO coverage % — federated applications as a percentage of the T0/T1 application inventory.

- Device compliance % — enrolled, policy-compliant devices as a percentage of the total device population. Track corporate and BYOD separately; expect different curves.

- Conditional access coverage % — T0/T1 applications gated by Conditional Access in enforcement mode (not shadow).

- Segmentation coverage % — T0/T1 applications behind micro-segmentation policies in enforcement mode. Target 60% by Day 90; tail continues into Year Two.

- Service account hygiene % — non-human identities under PAM with rotation enabled, as a percentage of discovered service accounts. Usually the most painful number on the dashboard — celebrate the trend, not the absolute.

- CI/CD OIDC adoption % — production-deploying workflows using OIDC federation rather than static credentials.

Every one of these is a one-gauge, one-number widget. The dashboard is boring on purpose — the goal is that the CISO can read it in 60 seconds and the steering committee in five minutes.
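Each gauge above reduces to a numerator, a denominator, and a target. A minimal sketch of that shape follows; the `Gauge` class, its field names, and the counts are hypothetical illustrations, not part of any tool named in this playbook.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Gauge:
    """One leading-indicator widget: a numerator, a denominator, a target."""
    name: str
    covered: int        # e.g. users enrolled in MFA
    in_scope: int       # e.g. all in-scope users
    target_pct: float   # Day-90 target

    @property
    def pct(self) -> float:
        # An empty denominator reports 0% instead of raising ZeroDivisionError.
        return 100.0 * self.covered / self.in_scope if self.in_scope else 0.0

    @property
    def on_target(self) -> bool:
        return self.pct >= self.target_pct


# Hypothetical counts, for illustration only.
gauges = [
    Gauge("MFA adoption", covered=1940, in_scope=2000, target_pct=100.0),
    Gauge("Segmentation coverage", covered=27, in_scope=45, target_pct=60.0),
]

for g in gauges:
    status = "on target" if g.on_target else "behind"
    print(f"{g.name}: {g.pct:.1f}% (target {g.target_pct:.0f}%), {status}")
```

Keeping the denominator as an explicit field makes it visible on the widget, so a change in scope cannot quietly inflate the percentage.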

Lagging Indicators (Quarterly)

Lagging indicators tell you whether the controls are working. They move in quarters and are what the board cares about.

- MTTD (mean time to detect) — IBM 2025 puts industry average at 158 days; SANS reports top-quartile SOCs under 60 minutes. Aim for quarter-over-quarter improvement, not a specific absolute.

- MTTC (mean time to contain) — more actionable than MTTD for Zero Trust because segmentation directly shortens it. Forrester’s wave data puts mature ZTA programmes at 40–50% faster containment.

- Lateral movement incidents — count per quarter. Should trend to near-zero as segmentation matures. One incident six months in is not a failure; an increasing trend is.

- Exception count and age distribution — from Exception Management. The >90-day bucket is the early warning the programme is drifting.

- Helpdesk volume attributable to access controls — spikes during enforcement rollouts are normal (expect 30–40% at the peak), but a persistently elevated baseline is a sign the controls are too tight for legitimate work.

- Cyber insurance premium change year-over-year — see the Budget Guide. A concrete dollar number the CFO understands without translation.
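Two of the lagging indicators above are simple to derive from records most teams already keep. The sketch below assumes MTTD is measured from estimated start of activity to detection and MTTC from detection to containment (some organisations measure MTTC from the start of activity instead). The `Incident` type, the bucket boundaries below 90 days, and all sample data are hypothetical.

```python
from dataclasses import dataclass
from datetime import date, datetime
from statistics import mean


@dataclass(frozen=True)
class Incident:
    occurred: datetime   # estimated start of attacker activity
    detected: datetime
    contained: datetime


def mttd_hours(incidents: list[Incident]) -> float:
    """Mean time to detect, in hours (assumed: occurred -> detected)."""
    return mean((i.detected - i.occurred).total_seconds() for i in incidents) / 3600


def mttc_hours(incidents: list[Incident]) -> float:
    """Mean time to contain, in hours (assumed: detected -> contained)."""
    return mean((i.contained - i.detected).total_seconds() for i in incidents) / 3600


def exception_age_buckets(opened: list[date], today: date) -> dict[str, int]:
    """Count open exceptions by age; the >90-day bucket is the drift warning."""
    buckets = {"0-30": 0, "31-90": 0, ">90": 0}
    for opened_on in opened:
        age = (today - opened_on).days
        if age <= 30:
            buckets["0-30"] += 1
        elif age <= 90:
            buckets["31-90"] += 1
        else:
            buckets[">90"] += 1
    return buckets


# Hypothetical quarter: two incidents and three open exceptions.
incidents = [
    Incident(datetime(2025, 1, 1, 0), datetime(2025, 1, 1, 6), datetime(2025, 1, 1, 18)),
    Incident(datetime(2025, 2, 1, 0), datetime(2025, 2, 1, 10), datetime(2025, 2, 2, 6)),
]
buckets = exception_age_buckets(
    [date(2025, 6, 20), date(2025, 5, 1), date(2025, 1, 15)],
    today=date(2025, 6, 30),
)

print(f"MTTD: {mttd_hours(incidents):.1f} h, MTTC: {mttc_hours(incidents):.1f} h")
print(buckets)
```

Whichever definitions are chosen, they need to stay fixed from quarter to quarter, since the board reads the trend rather than the absolute value.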

The CISA Maturity Overlay

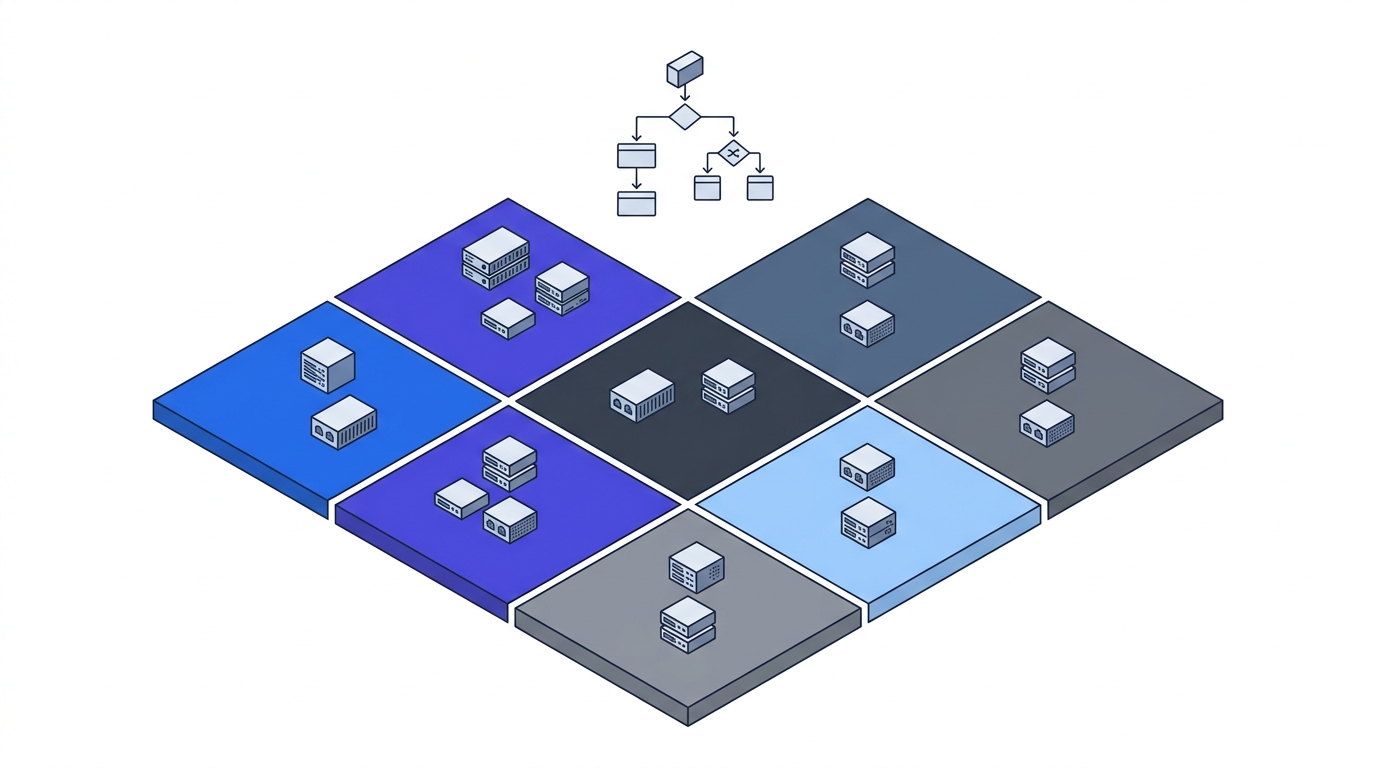

The CISA Zero Trust Maturity Model scores across five pillars (Identity, Devices, Networks, Applications/Workloads, Data) × four levels (Traditional, Initial, Advanced, Optimal). Plot the current state as a radar chart, overlay the Day-90 projection, and add a Year-Two target. This is the single most board-friendly artifact in the playbook because it makes multi-quarter progress visible on one slide.

Realistic 90-day targets for a programme starting at Traditional:

- Identity: Traditional → Advanced (the MFA + SSO + Conditional Access + service-account work shifts this two levels)

- Devices: Traditional → Initial (MDM enrolment live, compliance policies in place)

- Networks: Traditional → Initial (one segment in enforcement mode, baseline complete)

- Applications/Workloads: Traditional → Traditional (no realistic 90-day movement for most orgs)

- Data: Traditional → Traditional (Year-Two work)

A radar chart that shows movement on Identity, Devices, and Networks, with Applications and Data flat, is an honest 90-day story. A radar chart that shows all five pillars at Advanced is a vendor deck.
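The radar chart needs each pillar's level as a number. A minimal sketch of that encoding, using the 90-day targets listed above, follows; the 0 to 3 ordinal scale and the function names are assumptions made for illustration, since the CISA model itself names the levels but does not assign them scores.

```python
PILLARS = ["Identity", "Devices", "Networks", "Applications/Workloads", "Data"]
LEVELS = ["Traditional", "Initial", "Advanced", "Optimal"]  # plotted as 0..3

# A programme starting at Traditional on every pillar.
current = {pillar: "Traditional" for pillar in PILLARS}

# The 90-day targets from this chapter.
day_90 = {
    "Identity": "Advanced",
    "Devices": "Initial",
    "Networks": "Initial",
    "Applications/Workloads": "Traditional",
    "Data": "Traditional",
}


def radar_values(state: dict[str, str]) -> list[int]:
    """Ordinal score per pillar, in PILLARS order, ready for a radar plot."""
    return [LEVELS.index(state[pillar]) for pillar in PILLARS]


def movement(before: dict[str, str], after: dict[str, str]) -> dict[str, int]:
    """Number of maturity levels gained per pillar."""
    return {p: LEVELS.index(after[p]) - LEVELS.index(before[p]) for p in PILLARS}


print(radar_values(current))
print(radar_values(day_90))
print(movement(current, day_90))
```

The two value lists can be passed to any charting tool as the current and projected series; the movement dictionary is a quick check that the projection matches the story being told.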

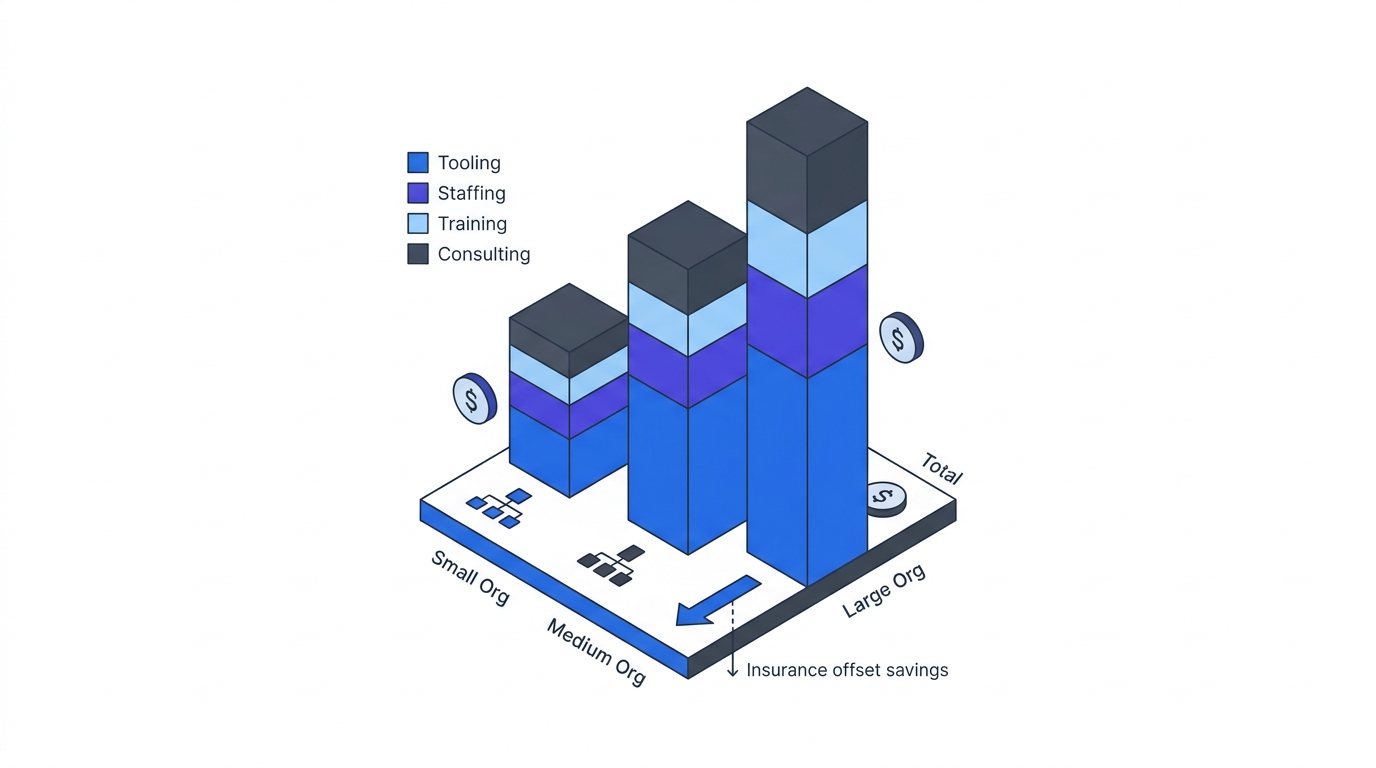

Translating to Dollars (The Board Framing)

The NACD Director’s Handbook is explicit: directors must be able to articulate the organisation’s financial exposure to cyber risk. The controls-coverage dashboard is necessary but not sufficient. The translation is:

“We moved privileged-access-exploitation risk from [baseline probability] to [post-control probability]. Against an average breach cost of $4.44M and the industry-average 241-day lifecycle, that translates to roughly $X of annual risk reduction. Mature programmes achieve 40–50% faster containment, which at our scale saves $Y per incident.”

Filling in X and Y requires a light actuarial model — the FAIR (Factor Analysis of Information Risk) framework is the most common. A rough estimate is better than no estimate; the board prefers “$2.8M–$4.1M in risk-adjusted annual exposure reduction” to “we improved our MFA coverage to 97%.”
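As a rough illustration of how X might be estimated, the sketch below multiplies a change in annual breach probability by the average breach cost cited above. This is a deliberate simplification: a full FAIR analysis works with calibrated ranges and distributions for loss frequency and magnitude. The probabilities are hypothetical placeholders and the function name is an assumption.

```python
AVG_BREACH_COST = 4_440_000  # IBM 2025 average breach cost, as cited above

# IBM 2025 figures cited above: breaches contained after vs. within 200 days.
# One possible starting point for Y, not a substitute for your own loss data.
CONTAINMENT_DELTA = 5_010_000 - 3_870_000


def risk_reduction_range(
    p_before: float,
    p_after_low: float,
    p_after_high: float,
    cost: float = AVG_BREACH_COST,
) -> tuple[float, float]:
    """Return (low, high) annual risk reduction in dollars.

    p_before is the baseline annual probability of the loss event;
    p_after_low and p_after_high bound the post-control probability.
    """
    low = (p_before - p_after_high) * cost
    high = (p_before - p_after_low) * cost
    return low, high


# Hypothetical inputs: 25% baseline, 10% to 15% after controls.
low, high = risk_reduction_range(0.25, 0.10, 0.15)

print(f"Annual risk reduction: ${low / 1e6:.2f}M to ${high / 1e6:.2f}M")
print(f"Containment delta per breach: ${CONTAINMENT_DELTA / 1e6:.2f}M")
```

Expressing the post-control probability as a range rather than a point estimate is what produces the "$X–$Y" form the board summary asks for.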

AI in the ROI Model

IBM’s 2025 data reports organisations deploying AI in security operations saw breach lifecycles shorten by 68–80 days and per-breach cost reduce by roughly $1.9M. If your Zero Trust programme includes anomaly-detection ML, behaviour-based Conditional Access, or automated containment, that is a line item in the ROI model — not as a vendor benefit statement, but as a quantified delta from a published benchmark.

The One-Page Board Summary

For the quarterly board review, the Zero Trust programme gets one page:

- CISA maturity radar — current vs. target.

- Three leading indicators — MFA coverage, segmentation coverage, CI/CD OIDC adoption. Arrows showing quarter trend.

- Two lagging indicators — MTTC quarter-over-quarter, exception count trend.

- Financial framing — one sentence: “Risk-adjusted exposure reduction this quarter: $X–$Y.”

- Ask — what the programme needs from the board this quarter (budget, political cover, an exception closed).

Everything else lives in appendices the board can read if they want. The one page is what they will actually read.
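A repeatable template can be as simple as a function that takes the quarter's numbers and returns the five lines. The sketch below is one hypothetical layout; the function names, wording, and sample figures are all assumptions for illustration.

```python
def trend(previous: float, current: float, higher_is_better: bool = True) -> str:
    """Describe the quarter-over-quarter direction of one indicator."""
    if current == previous:
        return "flat"
    improved = (current > previous) == higher_is_better
    return "improving" if improved else "worsening"


def board_summary(
    radar_note: str,
    leading: list[tuple[str, float, float]],   # (name, previous %, current %)
    lagging: list[tuple[str, float, float]],   # (name, previous, current); lower is better
    exposure_low: float,
    exposure_high: float,
    ask: str,
) -> str:
    """Render the one-page summary as five plain-text lines."""
    lead = "; ".join(f"{n} {c:.0f}% ({trend(p, c)})" for n, p, c in leading)
    lag = "; ".join(f"{n} {c:g} ({trend(p, c, higher_is_better=False)})" for n, p, c in lagging)
    return "\n".join([
        f"Maturity: {radar_note}",
        f"Leading: {lead}",
        f"Lagging: {lag}",
        f"Risk-adjusted exposure reduction this quarter: "
        f"${exposure_low / 1e6:.1f}M to ${exposure_high / 1e6:.1f}M",
        f"Ask: {ask}",
    ])


# Hypothetical quarter, for illustration only.
summary = board_summary(
    radar_note="Identity at Advanced; Devices and Networks at Initial",
    leading=[("MFA", 91, 97), ("Segmentation", 40, 60), ("CI/CD OIDC", 55, 55)],
    lagging=[("MTTC hours", 30, 22), ("Open exceptions", 12, 15)],
    exposure_low=2_800_000,
    exposure_high=4_100_000,
    ask="Approve Year-Two segmentation budget",
)
print(summary)
```

Generating the page from the same data that feeds the dashboards keeps the board numbers and the steering-committee numbers from drifting apart.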

What the Artifact Delivers

By the end of this chapter, you should have:

- Weekly leading-indicator dashboard, visible to the steering committee

- Quarterly lagging-indicator report with dollar-denominated framing

- CISA maturity radar updated quarterly with honest trajectory

- A one-page board summary on a repeatable template

- A light FAIR or equivalent model that lets you say $X–$Y of risk reduction with a straight face

Return to the 90-Day Playbook hub for the final cross-cutting chapter: budgeting.